Attention: This feature is being deprecated. If you're using Grafana Cloud k6, we recommend using Data source plugins for Grafana to visualize and correlate your APM and k6 metrics.

With this integration, you can export test-result metrics from k6 Cloud to Datadog, where you can visualize them and correlate them with your other monitored metrics.

⭐️ Cloud APM integrations are available on Pro and Enterprise plans, as well as the annual Team plan and Trial.

Necessary Datadog settings

To set up the integration in k6 Cloud, you need the following Datadog settings:

- API key

- Application key

To get your keys, follow the Datadog documentation: "API and Application Keys".

Supported Regions

The supported regions for the Datadog integration are us/us1 (default), eu/eu1, us3, us5, and us1-fed.

API and application keys created for one Datadog region don't work in a different region.

Export k6 metrics to Datadog

You must enable the Datadog integration for each test whose metrics you want to export.

After you set up the Datadog settings in the test, you can run a cloud test as usual. As the test runs, k6 Cloud continuously sends the test-result metrics to Datadog.

Currently, there are two options to set up the Cloud APM settings in the test: the test builder or the k6 script.

Configure Datadog export with the test builder

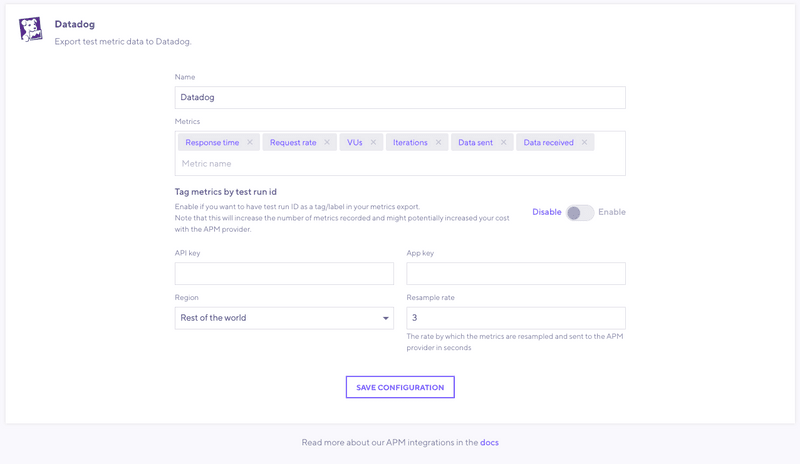

First, configure the Datadog integration for an organization.

From the Main navigation, go to Manage > Cloud APM and select Datadog.

In this form, set the API and application keys that you copied previously from Datadog.

For more information on the other input fields, see configuration parameters.

Save the Datadog configuration for the current organization.

Note that configuring the Datadog settings for an organization does not enable the integration. You must manually enable the integration for each test using the test builder.

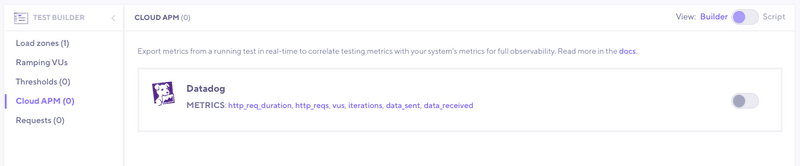

Create a new test with the test builder, or select an existing test previously created using the test builder.

Select the Cloud APM option on the test builder sidebar to enable the integration for the test.

Configuration in the k6 script

If you script your k6 tests, you can also configure the Cloud APM settings using the apm option in the k6 script.
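As a rough sketch of what this can look like, the snippet below places a single Datadog entry in an apm list. The nesting under ext.loadimpact is an assumption about where k6 Cloud reads the option from (the text above only names the apm option), and the key values are placeholders.

```javascript
// Sketch of a Datadog entry for the apm option.
// Assumption: k6 Cloud reads the list from options.ext.loadimpact.apm.
const datadogApm = {
  provider: 'datadog', // must be 'datadog' for this integration
  apiKey: '<Datadog API key>', // placeholder
  appKey: '<Datadog application key>', // placeholder
  region: 'us', // the default region
};

// In a k6 script, this object would be exported: `export const options = ...`.
const options = {
  ext: {
    loadimpact: {
      apm: [datadogApm],
    },
  },
};
```

The apm value is written as a list here so that each provider gets its own entry.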

The parameters to export the k6 metrics to Datadog are as follows:

Configuration parameters

| Name | Description |
|---|---|
| provider (required) | For this integration, the value must be datadog. |
| apiKey (required) | Datadog API key. |
| appKey (required) | Datadog application key. |
| region | One of Datadog regions/sites. See the list of supported regions. Default is us. |
| apiURL | Alternative to region. URL of Datadog Site API. Included for support of possible new or custom Datadog regions. Default is picked according to region, e.g. 'https://api.datadoghq.com' for us. |
| includeDefaultMetrics | If true, add default APM metrics to export: data_sent, data_received, http_req_duration, http_reqs, iterations, and vus. Default is true. |
| metrics | List of metrics to export. A subsequent section details how to specify metrics. |
| includeTestRunId | Whether all the exported metrics include a test_run_id tag whose value is the k6 Cloud test run id. Default is false. Be aware that enabling this setting might increase the cost of your APM provider. |
| resampleRate | Sampling period for metrics in seconds. Default is 3 and supported values are integers between 1 and 60. |
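The constraints in this table can be checked before a test runs. The following is a minimal sketch, not part of k6: checkDatadogApm is a hypothetical helper that applies only the rules stated above (required keys, supported regions, and the resampleRate range), and the key values are placeholders.

```javascript
// Regions listed under "Supported Regions", including the aliases.
const SUPPORTED_REGIONS = ['us', 'us1', 'eu', 'eu1', 'us3', 'us5', 'us1-fed'];

// Hypothetical helper (not part of k6): returns a list of problems found
// in a Datadog apm entry, based only on the parameter table above.
function checkDatadogApm(entry) {
  const problems = [];
  if (entry.provider !== 'datadog') problems.push('provider must be "datadog"');
  for (const key of ['apiKey', 'appKey']) {
    if (typeof entry[key] !== 'string' || entry[key] === '') {
      problems.push(`${key} is required`);
    }
  }
  // region is optional; apiURL is documented as an alternative to it.
  if (entry.region !== undefined && !SUPPORTED_REGIONS.includes(entry.region)) {
    problems.push(`unsupported region: ${entry.region}`);
  }
  // resampleRate is optional; when set it must be an integer from 1 to 60.
  const rate = entry.resampleRate;
  if (rate !== undefined && !(Number.isInteger(rate) && rate >= 1 && rate <= 60)) {
    problems.push('resampleRate must be an integer between 1 and 60');
  }
  return problems;
}

const example = {
  provider: 'datadog',
  apiKey: '<Datadog API key>', // placeholder
  appKey: '<Datadog application key>', // placeholder
  region: 'eu',
  includeDefaultMetrics: true,
  includeTestRunId: false,
  resampleRate: 3,
};

console.log(checkDatadogApm(example)); // prints []
```

A check like this only catches mistakes in the shape of the configuration; it cannot tell whether the keys belong to the selected region.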

Metric configuration

Each entry in the metrics parameter can be an object with the following keys:

| Name | Description |
|---|---|
| sourceMetric (required) | Name of the k6 built-in or custom metric to export, optionally with tag filters. Tag filtering follows the Prometheus selector syntax. Example: http_reqs{name="http://example.com",status!="500"} |
| targetMetric | Name of the resulting metric in Datadog. Default is the name of the source metric with a k6. prefix. Example: k6.http_reqs |
| keepTags | List of tags to preserve when exporting time series. |
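To illustrate these keys, here is a short sketch of a metrics list with two entries. The metric name my_rate and the target name k6.example_http_reqs are hypothetical, chosen only for the example.

```javascript
// Example entries for the metrics parameter, using the keys from the table above.
// 'my_rate' is a hypothetical custom metric name.
const metrics = [
  {
    // Export only http_reqs samples for one URL name, excluding status 500.
    sourceMetric: 'http_reqs{name="http://example.com",status!="500"}',
    targetMetric: 'k6.example_http_reqs', // hypothetical metric name in Datadog
    keepTags: ['status'],
  },
  {
    // No targetMetric: by default the name gets a k6. prefix (k6.my_rate).
    sourceMetric: 'my_rate',
  },
];

// Hypothetical check: every entry needs the required sourceMetric key.
const missingSource = metrics.filter((m) => typeof m.sourceMetric !== 'string');
console.log(missingSource.length); // prints 0
```

The first entry keeps only the status tag, so the exported series are split by status code and nothing else.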

keepTags can have a high cost

Most cloud platforms charge clients based on the number of time series stored.

When exporting a metric, every combination of kept-tag values becomes a distinct time series in Datadog. While this granularity can help test analysis, it can incur high costs when a test produces thousands of time series.

For example, if you add keepTags: ["name"] on http_* metrics, and your load test calls many dynamic URLs, the number of produced time series can build up very quickly. Refer to URL Grouping for how to reduce the value count for a name tag.
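The effect can be estimated with a small calculation. The sketch below is illustrative only: countTimeSeries is a hypothetical helper, and the samples are made-up requests to 1000 dynamic URLs, first with the raw URL as the name tag and then with one shared name value, as URL grouping would produce.

```javascript
// Count the distinct combinations of kept-tag values across samples.
// Each distinct combination corresponds to one exported time series.
function countTimeSeries(samples, keepTags) {
  const seen = new Set();
  for (const sample of samples) {
    const key = keepTags.map((tag) => `${tag}=${sample.tags[tag]}`).join(',');
    seen.add(key);
  }
  return seen.size;
}

// Hypothetical samples: 1000 requests to dynamic URLs such as /posts/<id>.
const dynamic = [];
const grouped = [];
for (let id = 0; id < 1000; id++) {
  // Template literal: the id is interpolated, so every name value is unique.
  dynamic.push({ tags: { name: `https://example.com/posts/${id}` } });
  // Plain string: ${id} stays literal, so every request shares one name value.
  grouped.push({ tags: { name: 'https://example.com/posts/${id}' } });
}

console.log(countTimeSeries(dynamic, ['name'])); // prints 1000
console.log(countTimeSeries(grouped, ['name'])); // prints 1
```

Keeping a second tag multiplies the count by the number of distinct values that tag takes, which is why high-cardinality tags are the ones to avoid.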

k6 recommends exporting only tags that are necessary and don't have many distinct values.

Read more: Counting custom metrics in Datadog documentation