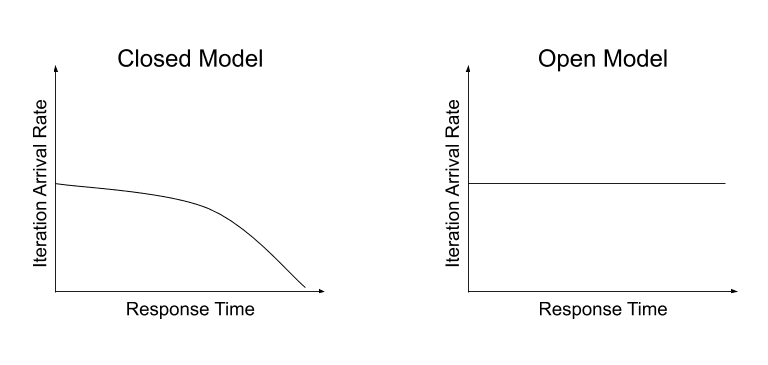

Different k6 executors have different ways of scheduling VUs. Some executors use the closed model, while the arrival-rate executors use the open model.

In short, in the closed model, VU iterations start only when the last iteration finishes. In the open model, on the other hand, VUs arrive independently of iteration completion. Different models suit different test aims.

Closed Model

In a closed model, the execution time of each iteration dictates the number of iterations executed in your test. The next iteration doesn't start until the previous one finishes.

Thus, in a closed model, the start or arrival rate of new VU iterations is tightly coupled with the iteration duration (that is, time from start to finish of the VU's exec function, by default the export default function):

Running this script would result in something like:

Drawbacks of using the closed model

When the duration of the VU iteration is tightly coupled to the start of new VU iterations, the target system's response time can influence the throughput of the test. Slower response times means longer iterations and a lower arrival rate of new iterations―and vice versa for faster response times. In some testing literature, this problem is known as coordinated omission.

In other words, when the target system is stressed and starts to respond more slowly, a closed model load test will wait, resulting in increased iteration durations and a tapering off of the arrival rate of new VU iterations.

This effect is not ideal when the goal is to simulate a certain arrival rate of new VUs, or more generally throughput (e.g. requests per second).

Open model

Compared to the closed model, the open model decouples VU iterations from the iteration duration. The response times of the target system no longer influence the load on the target system.

To fix this problem of coordination, you can use an open model, which decouples the start of new VU iterations from the iteration duration. This reduces the influence of the target system's response time.

k6 implements the open model with two arrival rate executors: constant-arrival-rate and ramping-arrival-rate:

Running this script would result in something like: