This post shows how xk6-disruptor, a k6 extension for fault injection, can help improve the reliability of applications by “shifting left” fault injection testing.

The importance of shifting-left fault injection testing

After an incident affects the service levels of an application, development teams are frequently tasked with finding measures to mitigate the effects of similar situations. Teams then need to conduct tests to reproduce the disruptions experienced by the application – for example, high latency, connection drops or failed requests to services it depends on – in order to identify the causes of the application’s inability to cope with the incident and also to verify the effectiveness of proposed solutions.

Depending on the complexity of the root causes of the incident – node failure, network congestion, or a combination of environment conditions such as other workloads – it may be difficult for the development teams to reproduce it. Sometimes the assistance of other teams (reliability engineering, platform engineering, DevOps) is required in order to do so. In other cases the incident cannot be reproduced in a reliable way and the “testing” occurs when another incident happens.

xk6-disruptor is a k6 extension that aims to help teams test the reliability of their applications early in the development cycle. It offers an API for injecting faults from k6 test scripts, which lets teams reuse their existing tests to generate load.

This API is based on disruptors, which affect specific targets by injecting different types of faults. Currently, disruptors exist for Pods and Services, and both can inject faults into HTTP requests. Other disruptors will be introduced in the future, as well as additional types of faults for the existing disruptors.

Attention: xk6-disruptor is intended for systems running in Kubernetes. Other platforms are not supported at this time.

In the rest of this post, we explore xk6-disruptor through a case study based on a fictitious incident in a demo application, introducing the API and some practical aspects of conducting fault injection tests in a microservices application.

The case study: a fictitious but all-too-familiar incident

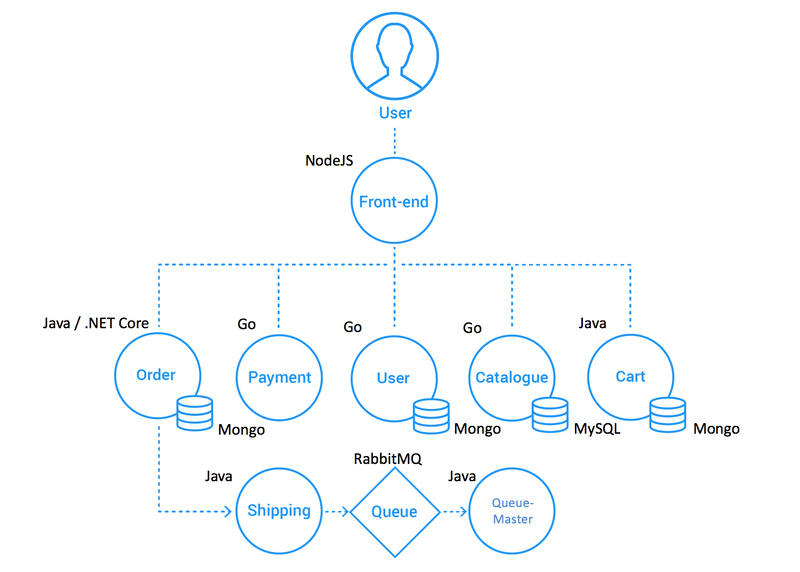

To better understand how development teams can use xk6-disruptor, let’s consider a fictional incident involving the Socks Shop demo application. This application implements a fully functional e-commerce site that allows users to register, browse the catalog, and buy items. It follows a polyglot, microservices-based architecture. Each microservice has its own API, which can be accessed directly through its corresponding Kubernetes service. The front-end service works as a backend for the web interface, but it also exposes the APIs of other services, acting as a kind of API gateway.

Let's consider a hypothetical incident triggered by long-running queries in the Catalogue service’s database. These queries delayed requests (by up to 100ms over the normal response time) and eventually exhausted the database sessions, which resulted in HTTP 500 errors. The Catalogue service team will investigate the incident to address the root cause. Meanwhile, the Front-end team wants to validate how resilient its service is to this kind of disruption.

Preparing for the tests

Before getting into the test, it is important to ensure that you have your environment properly set up. This tutorial assumes that you are familiar with Kubernetes concepts such as deploying applications and exposing them using services. This tutorial also assumes you have access to a Kubernetes cluster on which you can deploy the demo application.

Note: If you do not have a test cluster for running this demo, you can install a local test cluster using Kind or Minikube.

Setup test environment

xk6-disruptor is a k6 extension. To use it in a k6 test script, it is necessary to use a custom build of k6 that includes it. Refer to the Installation Guide for instructions on how to get this custom build.

xk6-disruptor needs to interact with the Kubernetes cluster on which the application is running. To do so, you must have the credentials to access the cluster in a kubeconfig file. Make sure the KUBECONFIG environment variable points to this file, or that the file is located at the default location (on Linux-based systems, $HOME/.kube/config). For this tutorial you will also need kubectl installed in your test environment.
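The lookup order described above can be summarized in a few lines. This is an illustrative sketch of the rule only, not the extension's own code: the function name `resolveKubeconfig` is hypothetical, and taking just the first entry of a multi-path KUBECONFIG is a simplification (kubectl merges all the listed files).

```javascript
// Hypothetical helper illustrating where the kubeconfig file is expected:
// the KUBECONFIG environment variable wins; otherwise the default location
// under the user's home directory is used (Linux-style path).
function resolveKubeconfig(env) {
  const fromEnv = env.KUBECONFIG;
  if (fromEnv) {
    // KUBECONFIG may list several files separated by ":" on Linux;
    // this simplified sketch only returns the first entry.
    return fromEnv.split(":")[0];
  }
  return `${env.HOME}/.kube/config`;
}

console.log(resolveKubeconfig({ KUBECONFIG: "/tmp/kind-config" })); // /tmp/kind-config
console.log(resolveKubeconfig({ HOME: "/home/alice" })); // /home/alice/.kube/config
```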

Deploying the Socks Shop application

The Socks Shop application can be deployed on a Kubernetes cluster by applying the manifest with all the required resources, using the following command:


Note: The application is deployed in the sock-shop namespace.

Exposing the application to the tests

Once the deployment is completed, a Kubernetes service is defined for each microservice of the application:

As you can see in the EXTERNAL-IP column of the output, these services do not have an IP address accessible from outside the cluster. However, you will need to access the front-end service from the machine where the test runs. Refer to the xk6-disruptor Get Started guide for hints on how to expose a service; the specific details depend on the setup of your cluster. The remainder of this tutorial assumes that the front-end service is accessible at an IP address defined in the environment variable SVC_IP. The following command can be used to test this access:

The test

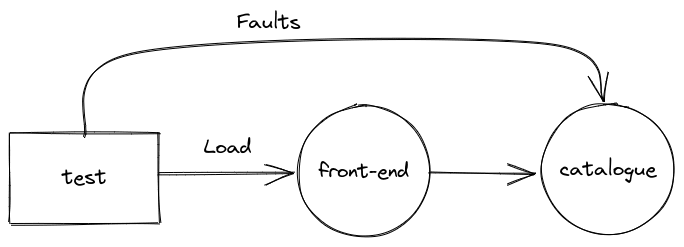

The objective of the test is to validate the behavior of the front-end service when its requests to the catalogue service suffer the same disruptions observed in the incident: increased latency and errors. The test therefore injects faults into the requests from the front-end service to the catalogue service while sending requests to the front-end service, as shown in the figure above.

The next sections explain each part of the test. You can find the source code here. Download it to your working directory.

Init

The init code imports the ServiceDisruptor class from the xk6-disruptor extension and the k6 http module, which is used to make HTTP requests. It also defines the product keys used when making requests to the front-end service.

Scenarios

The test defines three scenarios. The base scenario applies the test load to the front-end service for 30 seconds to establish a baseline. The inject scenario starts 30 seconds into the test run and invokes the injectFaults function once, injecting faults into the HTTP requests to the catalogue service. The fault scenario also starts 30 seconds into the test run and reproduces the same workload as the base scenario, but now under the effect of the faults introduced by the inject scenario.

Attention: The inject scenario is defined to execute only one iteration, because the injectFaults function must be called only once.
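A k6 scenarios configuration matching this description could look like the sketch below. The executor types, the rate of 20 iterations per second, and the VU counts are assumptions, since the post does not state them. In the real test script this object would be exported as `export const options = { ... }`, and each `exec` value must name an exported function; here it is plain JavaScript so the timing can be checked outside k6.

```javascript
// Load shared by the "base" and "fault" scenarios. The executor type and
// rate are illustrative assumptions, not values taken from the post.
const load = {
  executor: "constant-arrival-rate",
  rate: 20,
  timeUnit: "1s",
  duration: "30s",
  preAllocatedVUs: 5,
  maxVUs: 100,
  exec: "requestProduct",
};

const options = {
  scenarios: {
    // Baseline: starts at time 0 and runs for 30 seconds.
    base: { ...load },
    // Calls injectFaults exactly once, 30 seconds into the test.
    inject: {
      executor: "shared-iterations",
      iterations: 1,
      vus: 1,
      exec: "injectFaults",
      startTime: "30s",
    },
    // Same load as "base", running while the faults are active.
    fault: { ...load, startTime: "30s" },
  },
};
```

Reusing one `load` object for both the base and fault scenarios keeps the two workloads identical, so differences in the results can be attributed to the injected faults.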

The requestProduct function makes an API request for a random product in the catalog, using the front-end service’s IP address passed in the SVC_IP environment variable. The front-end service API always returns status code 200, regardless of whether the request succeeded, so we add a check on whether the response body contains an error attribute.
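The request and its check can be expressed as small pure functions. In this sketch the product IDs are hypothetical placeholders (the real script uses product keys from the catalog), and the `/catalogue/<id>` path is an assumption about the front-end API. In k6, `productUrl` would be called with `__ENV.SVC_IP`, the result passed to `http.get`, and `noErrorInBody` used inside `check(res, { ... })`.

```javascript
// Hypothetical placeholder IDs; the real test uses product keys from the catalog.
const products = ["product-a", "product-b", "product-c"];

// Build the URL of a random product on the front-end service.
// svcIp would come from __ENV.SVC_IP in the k6 script.
function productUrl(svcIp, ids = products) {
  const id = ids[Math.floor(Math.random() * ids.length)];
  return `http://${svcIp}/catalogue/${id}`;
}

// The front-end returns 200 even on failure, so the status code alone is not
// enough: the JSON body must also be free of an "error" attribute.
function noErrorInBody(res) {
  if (res.status !== 200) return false;
  try {
    const body = JSON.parse(res.body);
    return !(body !== null && typeof body === "object" && "error" in body);
  } catch (e) {
    return false; // a non-JSON body is treated as a failure
  }
}
```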

The injectFaults function creates a ServiceDisruptor for the catalogue service. The disruptor works by installing an agent in the Pods that back this service. These agents intercept the incoming requests and inject faults. The test defines a fault that adds a 100ms delay to each request and returns status code 500 for 10% of them. The fault definition also specifies the content of the response body when an error is injected. The fault is injected into the service for 30 seconds.

Note: The fault definition excludes the /health path so that Kubernetes does not restart the pods backing the catalogue service because of a failing liveness probe. The endpoint for this probe is defined in the service’s deployment manifest.
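The fault described above might be written as follows. The field names (`averageDelay`, `errorRate`, `errorCode`, `errorBody`, `exclude`) and the `injectHTTPFaults(fault, duration)` call reflect our understanding of the xk6-disruptor HTTP fault API in recent releases, where delays and durations are strings such as "100ms"; earlier releases may have used plain numbers, so check the documentation for your version. The error body is illustrative. To keep the sketch runnable outside k6, the disruptor is passed in as a parameter and replaced by a stub; this differs from the real scenario function, which would create it with `new ServiceDisruptor("catalogue", "sock-shop")` imported from `k6/x/disruptor`.

```javascript
// Fault mirroring the incident: +100ms per request, HTTP 500 for 10% of them.
const fault = {
  averageDelay: "100ms",
  errorRate: 0.1,
  errorCode: 500,
  errorBody: '{"error": "Unexpected error"}', // illustrative body
  exclude: "/health", // leave the liveness probe endpoint unaffected
};

// In k6: const disruptor = new ServiceDisruptor("catalogue", "sock-shop");
// Taking the disruptor as a parameter lets this sketch run with a stub.
function injectFaults(disruptor) {
  disruptor.injectHTTPFaults(fault, "30s");
}

// Stub standing in for the real ServiceDisruptor; it only records the call.
const calls = [];
injectFaults({ injectHTTPFaults: (f, d) => calls.push({ f, d }) });
console.log(calls[0].d); // 30s
```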

Reporting metrics

To facilitate the comparison of the results between the base and fault scenarios, we define thresholds for the http_req_duration and the checks metrics:
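One way to get separate figures for each scenario is to declare thresholds on sub-metrics filtered by the scenario tag, which k6 attaches to metrics automatically. This sketch uses always-true conditions (`max>=0`, `rate>=0`), so the thresholds never fail the run and only make k6 list each sub-metric in the end-of-test summary; the conditions in the original script may differ. In the script, this object would go under the `thresholds` key of the exported options.

```javascript
// Thresholds on per-scenario sub-metrics. The conditions always pass; their
// purpose is to make k6 report each sub-metric separately in the summary.
const thresholds = {
  "http_req_duration{scenario:base}": ["max>=0"],
  "checks{scenario:base}": ["rate>=0"],
  "http_req_duration{scenario:fault}": ["max>=0"],
  "checks{scenario:fault}": ["rate>=0"],
};
```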

Execution

Let's execute the test and compare the results of the base and fault scenarios.

We can see that for the base scenario 100% of requests pass the check and the average http_req_duration is 5.6ms. For the fault scenario only 87.5% of requests pass the check (therefore 12.5% fail, a ratio close to the 10% injected) and the average http_req_duration is 106.52ms, reflecting the additional 100ms injected by the ServiceDisruptor.
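The comparison can be made explicit with a little arithmetic on the numbers above. Assuming each request to the front-end triggers exactly one request to the catalogue service (our assumption, not stated in the post), the expected pass rate is 1 - errorRate = 90%, and the expected average latency is the baseline plus the injected 100ms.

```javascript
// Numbers reported for the base and fault scenarios in this post.
const observed = { baseAvgMs: 5.6, faultAvgMs: 106.52, faultPassRate: 0.875 };
// Parameters of the injected fault.
const injected = { delayMs: 100, errorRate: 0.1 };

// Expected values, assuming one catalogue request per front-end request.
const expectedAvgMs = observed.baseAvgMs + injected.delayMs; // about 105.6
const expectedPassRate = 1 - injected.errorRate; // 0.9

// Latency actually added, and gap between expected and observed pass rates.
const addedLatencyMs = observed.faultAvgMs - observed.baseAvgMs;
const passRateGap = expectedPassRate - observed.faultPassRate;

console.log(addedLatencyMs.toFixed(2)); // 100.92
console.log(passRateGap.toFixed(3)); // 0.025
```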

Conclusions

In this tutorial we have shown how xk6-disruptor can be used to test applications under disruptive conditions. We have seen how, with a few lines of code, a load test can be transformed into a fault injection test.

Future plans

As we mentioned at the beginning of this post, xk6-disruptor currently offers a limited set of disruptors and faults. However, we plan to develop additional disruptors, as well as additional faults for the existing ones. Take a look at the project's roadmap.

Get involved

If you're interested in contributing, you can find xk6-disruptor on GitHub. We are looking forward to receiving your feedback!