This article describes how to build an external command-and-control UI for exploratory load testing with k6. The UI is written in Python and features some awesome ASCII graphics using the Python curses library.

The resulting little Python app actually implements a feature that, at the time of writing, you cannot get anywhere else: the ability to dynamically alter the load level of a running load test!

This lets you do "exploratory" load testing with k6, where you start your load test at some fixed load level and then adjust the load up or down during the test, depending on what you see happening on the target system. Just press "+" or "-" on your keyboard to alter the load level! You can also pause the whole test - temporarily halting all traffic - with the push of a button. Cool, isn't it?

The Python code can be found at https://github.com/ragnarlonn/k6control if you want to contribute. Feel free also to steal things and build your own UI of course!

Note that this article assumes you're already familiar with k6 and that you have some software development skillz (even better if you've used Python a bit).

The k6 REST API

As described in the previous article, k6 will by default fire up an API server on TCP port 6565, where it exposes the command-and-control API. Currently there are only a small number of things you can do via this API, but hopefully it will be extended with more end points in the future. Here is a description of the API, in full:

GET /v1/status

Get status data for the running test:

PATCH /v1/status

Update status data for the running test (here we set paused=false, and the server returns the newly updated status):

localhost:6565/v1/status

GET /v1/metrics

Get all metrics:

GET /v1/metrics/

Get a specific metric:

GET /v1/groups

Get all defined groups:

GET /v1/groups/

Get a specific group:

That’s really it! You use the five GET end points to get live information from a running test, and you use the PATCH end point to control the test: change the number of VUs and pause/resume the test (currently, there is no way to abort the test using the API).

The Python app

So now we know what the API looks like, and what it can do. Time to write some code to actually do it!

Some general information before we dive in:

- I'm using the Python requests library to issue HTTP requests to the k6 API server

- The Python curses library enables the jaw-dropping graphix

- The code is following every single PEP directive for coding standards... Not! Maybe some of them. Perhaps. Better not use this code to teach people Python.

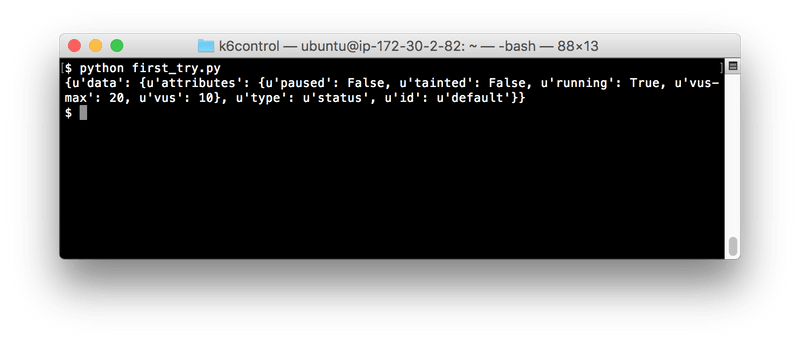

The first try

So. Where to start? The simplest thing we can do is probably GETting and displaying the overall status of a running load test, using the GET /v1/status end point. Let's try that - here is the Python code:
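A minimal sketch of such a status fetch. To run without extra packages it uses the standard library's `urllib` rather than `requests`, and it assumes the response is a JSON document with the status fields under `data.attributes`. The function names and that layout are assumptions, so check them against your k6 version.

```python
import json
import urllib.request

K6_URL = "http://localhost:6565"  # default API port mentioned above


def extract_attributes(document):
    """Return the status fields from a decoded response.

    Assumption: the fields live under data.attributes (JSON:API style).
    """
    return document["data"]["attributes"]


def fetch_status(base_url=K6_URL, timeout=5):
    """GET /v1/status from a running k6 instance and return its fields."""
    with urllib.request.urlopen(base_url + "/v1/status", timeout=timeout) as resp:
        return extract_attributes(json.load(resp))


def format_status(attributes):
    """Render the status fields as 'key: value' lines, sorted by key."""
    return "\n".join(f"{key}: {attributes[key]}" for key in sorted(attributes))
```

With k6 running, `print(format_status(fetch_status()))` prints one line per status field.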

That should do it. Then we fire up a k6 instance. I'm using a simple k6 test script that looks like this:

Tip: sleeping a random amount of time between iterations is often useful as it means VUs will not operate in sync. You usually don't want all your VUs to issue requests at the exact same time.

So, first we execute k6 like this:

Tip 2: use the --linger command-line flag to tell k6 not to exit after the test has finished, and to keep the API server available all the time.

And after starting k6 we run our Python program in another terminal window:

Well, that worked. The app fetched some status info from the running k6 instance and printed it onscreen.

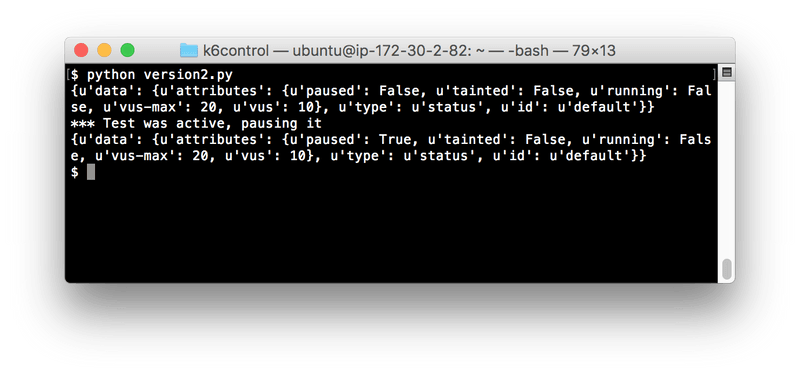

Version 2

So let's try changing the state of the test with the app. First we read the status, then we use the PATCH /v1/status end point to change the "paused" state of the test, then we read the status again to verify that we managed to pause the test.
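The read, PATCH, read-again sequence could be sketched roughly like this. It uses the standard library's `urllib` instead of `requests`, and the request envelope (`type` "status", `id` "default") is an assumption about what k6 expects. The helper names are made up for illustration.

```python
import json
import urllib.request

K6_URL = "http://localhost:6565"


def status_patch_body(**attributes):
    """Build the JSON body for PATCH /v1/status.

    Assumption: k6 expects a JSON:API style envelope with type "status"
    and id "default"; verify this against the API docs for your version.
    """
    document = {"data": {"type": "status", "id": "default", "attributes": attributes}}
    return json.dumps(document).encode("utf-8")


def call_status(base_url=K6_URL, body=None, timeout=5):
    """GET the status (body is None) or PATCH it; return the status fields."""
    request = urllib.request.Request(
        base_url + "/v1/status",
        data=body,
        method="GET" if body is None else "PATCH",
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.load(resp)["data"]["attributes"]


def toggle_pause(base_url=K6_URL):
    """Read the status, flip 'paused', then read again to verify."""
    before = call_status(base_url)
    call_status(base_url, status_patch_body(paused=not before["paused"]))
    after = call_status(base_url)
    return before["paused"], after["paused"]
```

Calling `toggle_pause()` twice against a running test should pause it and then resume it.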

This is what happens when we run the program:

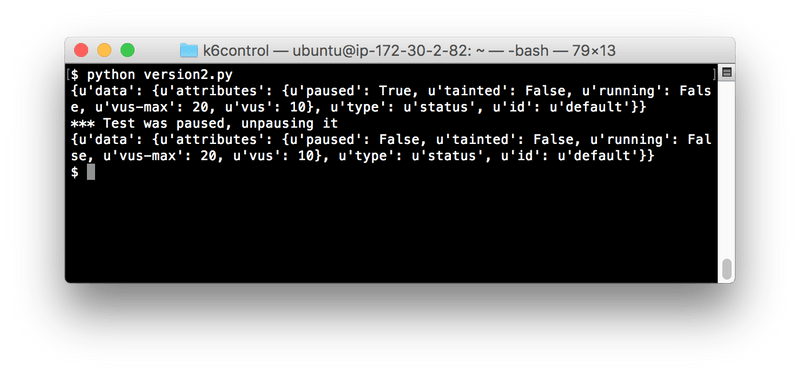

And if the k6 test was still running you'll note that execution (load generation) will have stopped at this point. We can run our program again to make k6 resume the load test:

Increase & decrease VU level

So now that we know how to change the status of a running test we of course want to be able to manipulate the load level of the test. Let's create a small app for that:
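One possible shape for such an app, as a sketch only. It assumes the status response carries `vus` and `vus-max` fields under `data.attributes` and that the VU count should stay between 0 and `vus-max`. The function names are hypothetical, and `urllib` stands in for `requests`.

```python
import json
import urllib.request

K6_URL = "http://localhost:6565"


def clamp_vus(current, delta, vus_max=None):
    """Return current + delta, kept at or above 0 and at or below vus_max."""
    target = max(0, current + delta)
    if vus_max is not None:
        target = min(target, vus_max)
    return target


def _status_request(base_url, body=None, timeout=5):
    """GET the status (body is None) or PATCH it; return the status fields."""
    request = urllib.request.Request(
        base_url + "/v1/status",
        data=body,
        method="GET" if body is None else "PATCH",
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.load(resp)["data"]["attributes"]


def change_vus(delta, base_url=K6_URL):
    """Raise or lower the VU level of the running test by delta."""
    status = _status_request(base_url)
    # "vus" and "vus-max" are assumed field names; check your k6 version
    target = clamp_vus(status["vus"], delta, status.get("vus-max"))
    body = json.dumps(
        {"data": {"type": "status", "id": "default", "attributes": {"vus": target}}}
    ).encode("utf-8")
    return _status_request(base_url, body)
```

For example, `change_vus(+5)` would ask k6 for five more VUs and `change_vus(-5)` for five fewer.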

Live statistics for a test

Now we want to follow a test in real time, keeping track of how many requests/second etc. k6 is generating. We'll use the GET /v1/metrics end point to retrieve all metrics from k6. If we just want a single metric (like the "http_reqs" metric - a counter keeping track of how many HTTP requests k6 has made), we can GET /v1/metrics/http_reqs. We'll start by using the latter end point, for clarity (less response data to sift through).

But something's wrong with this data! It doesn't work for our purpose: we want to show the current HTTP requests/second rate, but this metric provides a "rate" value that is calculated for the whole load test. That means that if we, for example, run a load test that generates 10 HTTP requests/second for 10 minutes and then suddenly there is a spike to 100 requests/second, this rate metric would take maybe 30 seconds to change visibly, and even then it would not show the actual current 100 req/s rate. That's not much fun. We want our UI app to show traffic spikes as they happen, when they happen.

k6 does not provide point-in-time rate values for its counter metrics (maybe that is something that could be implemented in the future?) so we have to calculate those metrics ourselves here. The way to do that is of course to poll k6 for the cumulative counter value, at two different points in time, and then calculate what the rate was between those two points in time. Like this:

Here we can see that the "http_reqs" counter metric changed from 287 to 331 over 5 seconds. That means that k6 generated 331-287=44 requests during those 5 seconds. That is 44/5=8.8 requests/second.
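The same arithmetic as a tiny function, using the two counter readings quoted above (the function name is just for illustration):

```python
def rate_between(count_then, count_now, seconds):
    """Average events per second between two readings of a cumulative counter."""
    if seconds <= 0:
        raise ValueError("the two samples must be taken at different times")
    return (count_now - count_then) / seconds


# 287 -> 331 requests over 5 seconds
print(f"{rate_between(287, 331, 5):.1f} requests/second")  # 8.8 requests/second
```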

Let's write a small Python program that displays live rates for some interesting k6 counter metrics:

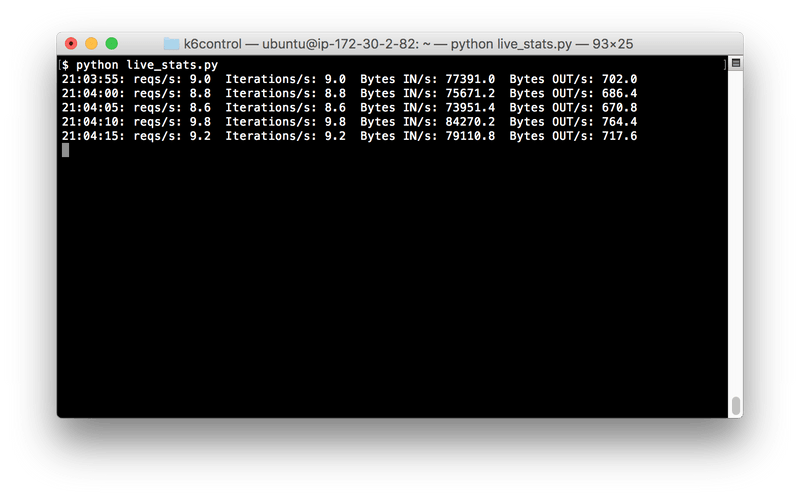

This looks better. Here is the output:

The numbers are updated live every 5 seconds, and show the rates for the past 5-second period only, so they will catch a sudden change in traffic.

But where are the graphix!?

A command-and-control UI for a load testing app would be boring if it couldn't draw some kind of chart. It also needs single-key commands, because it has to feel a bit more like a game! And it needs retro-terminal-inspired colors!

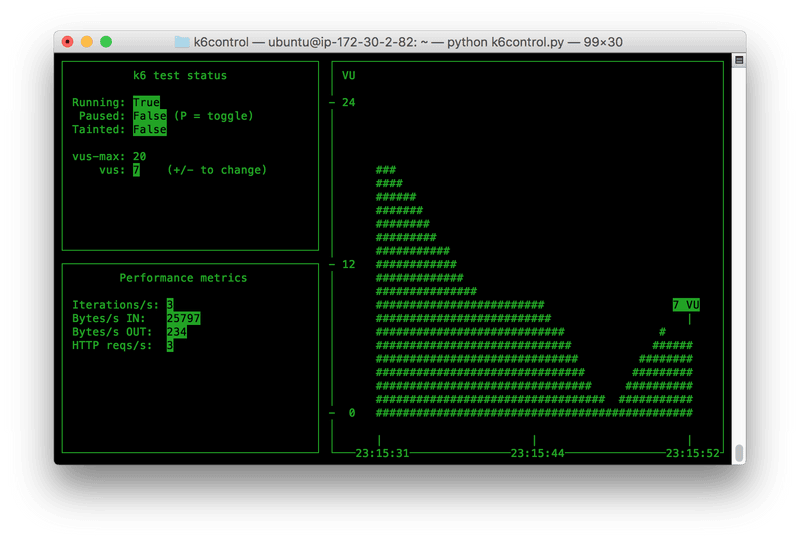

So we'll do a bit of refactoring, add a couple of features and also introduce curses into the mix. Then we get...THIS:

Oops, suddenly we're at 300 lines of code. That went fast. I guess I could have continued describing each step along the way here, but the blog article would have become more or less a (not so fantastic) tutorial for the curses library. I think instead that I'll quickly describe the code and direct you to the GitHub repo.

If you just want to try out this nifty little app, you can just go to the releases page and download a ZIP file with the Python code. Unzip it, then run python k6control.py.

k6control

Yeah, that's what I named it. Nothing wrong with my imagination, as you can see. But anyway, the code is included at the end of the article also, in case you happen to have MAXINT browser windows open and can't open a single one more.

A quick description of what the code does:

- main() gets executed

- main() parses command-line options, then executes the run() function via curses.wrapper(), which helps reset the terminal to a sane/usable state in case the program should exit via an uncaught exception, or something similar

- run() is the actual "main" function here. It does a lot of things:

- It creates a "Communicator" object, which will fetch data from the running k6 instance

- It sets some global curses options

- It makes the Communicator object fetch some initial data from the running k6 instance

- It creates three curses windows: one "status window", one "vu window" and one "metrics window"

- Then it enters the run loop, where it looks for keyboard input or terminal resize events, and acts on them
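The overall main() / curses.wrapper() / run() structure described above could be sketched like this. It is a stripped-down illustration, not the k6control code: it draws a single line instead of three windows, never talks to k6, and the "q" quit key and the refresh interval are assumptions.

```python
import curses

REFRESH_MS = 1000  # hypothetical redraw interval


def vus_after_key(key, vus, step):
    """Return the VU target after a key press: '+' raises it, '-' lowers it."""
    if key == ord("+"):
        return vus + step
    if key == ord("-"):
        return max(0, vus - step)
    return vus


def run(stdscr, step=1):
    """Run loop: wait for keys or resize events, then redraw."""
    try:
        curses.curs_set(0)          # hide the cursor where supported
    except curses.error:
        pass
    stdscr.timeout(REFRESH_MS)      # getch() returns -1 if no key arrives in time
    vus = 1                         # placeholder; a real app would read this from k6
    while True:
        _, width = stdscr.getmaxyx()
        stdscr.erase()
        text = f"target VUs: {vus}   (+/- to change, q to quit)"
        stdscr.addnstr(0, 0, text, max(0, width - 1))
        stdscr.refresh()
        key = stdscr.getch()
        if key == ord("q"):         # 'q' as the quit key is an assumption
            break
        if key == curses.KEY_RESIZE:
            continue                # terminal resized: loop around and redraw
        vus = vus_after_key(key, vus, step)


def main():
    # wrapper() initialises curses, calls run(stdscr), and restores the
    # terminal afterwards, even if run() raises an exception
    curses.wrapper(run)
```

Calling `main()` from a real terminal starts the loop. Keeping the key handling in a plain function like `vus_after_key()` makes it easy to test without a terminal.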

These are the three curses-defined, on-screen windows:

- The Status window on the upper left contains general information about the test - whether it is running or not (if it is not, it means the test has finished), if it is paused or not (a paused test can still be "running"), how many active VUs there are currently, and things like that. Basically all the data you get from a call to the /v1/status API end point

- The VU window takes up the whole right side of the terminal. It displays a graph of the historical VU levels during the test. Note that the graph has a fixed & limited time range that it can display, and it updates continuously so if k6control has been running a while you will not be able to see the whole history of the load test in the graph.

- The Metrics window is on the left side, below the Status window. It shows the current state of a few performance metrics: bytes sent and received per second, HTTP requests made per second, and script iterations per second.

There is one class instantiated as an object for each window. The window objects contain functionality for populating the windows with information and for resizing them in case the terminal window is resized.
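To illustrate the fixed, limited time range of the VU graph, here is one way a bounded history could be turned into ASCII bars. This is a hypothetical sketch and not how k6control draws its chart: `render_bars` and its parameters are invented for the example.

```python
from collections import deque


def render_bars(history, height, top):
    """Return `height` text rows (top row first), one column per sample."""
    rows = []
    for row in range(height, 0, -1):
        threshold = top * row / height
        # a column is filled on this row if its value reaches the row's level
        rows.append("".join("#" if value >= threshold else " " for value in history))
    return rows


history = deque(maxlen=4)   # keeps only the 4 newest samples
for vus in [1, 2, 4, 4, 2]:
    history.append(vus)     # the oldest sample (1) falls off the left edge

print("\n".join(render_bars(history, height=2, top=4)))
```

Because the deque has a maximum length, old samples drop out automatically as new ones arrive, which is why a long-running session cannot show the whole test history.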

There is also one class (Communicator) that handles all the data fetching from the running k6 instance. It saves all old data it has fetched, with time stamps. This allows the program to calculate delta values for several counter metrics - i.e. calculate how much a metric has changed since last time it was fetched. This means we can calculate things like current number of HTTP requests/second, etc.
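The timestamped-history idea can be sketched as a small class. This is an illustration of the technique rather than the actual Communicator: it leaves out the HTTP fetching, caps the stored history to bound memory, and the class and method names are made up.

```python
import time
from collections import deque


class CounterHistory:
    """Keeps timestamped readings of cumulative counters and derives rates."""

    def __init__(self, max_samples=100):
        self._samples = {}              # metric name -> deque of (timestamp, count)
        self._max_samples = max_samples

    def record(self, name, count, timestamp=None):
        """Store a counter reading; the timestamp defaults to the current time."""
        if timestamp is None:
            timestamp = time.monotonic()
        series = self._samples.setdefault(name, deque(maxlen=self._max_samples))
        series.append((timestamp, count))

    def current_rate(self, name):
        """Rate between the two most recent readings, or None if unavailable."""
        series = self._samples.get(name, ())
        if len(series) < 2:
            return None
        (t0, c0), (t1, c1) = series[-2], series[-1]
        if t1 <= t0:
            return None
        return (c1 - c0) / (t1 - t0)
```

Recording 287 at time 0 and 331 at time 5 for "http_reqs" makes `current_rate("http_reqs")` return 8.8.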

The Communicator object should probably have handled the outgoing PATCH requests also, which are now sent from the run() function directly. Ah well, future improvements.

Command-line options

Run the program with the -h/--help flag to see the built-in help text

Basically, you can do these three things:

Specify where the running k6 instance is:

Change how frequently data should be fetched from the k6 instance:

How many VUs to be added/removed with +/- controls:
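As a rough sketch of how three such options could be parsed with `argparse`: the flag names and defaults below are assumptions made for illustration (only the port 6565 comes from the text), so run the program with -h to see the real ones.

```python
import argparse


def parse_args(argv=None):
    """Parse the three options described above (flag names are hypothetical)."""
    parser = argparse.ArgumentParser(description="Control a running k6 instance")
    parser.add_argument("-a", "--address", default="localhost:6565",
                        help="host:port of the running k6 instance")
    parser.add_argument("-i", "--interval", type=float, default=1.0,
                        help="seconds between data fetches")
    parser.add_argument("-v", "--vumod", type=int, default=1,
                        help="VUs to add or remove with the +/- keys")
    return parser.parse_args(argv)
```

For example, `parse_args(["-a", "10.0.0.5:6565", "-i", "2", "-v", "10"])` yields an address, a 2-second interval and a step of 10 VUs.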

Build something!

This whole project, and the article, was meant to demonstrate how easy it is to integrate/interface with k6, in the hope that someone reading this gets inspired to build something themselves.

If you like the app and want to contribute, I'd absolutely accept pull requests (provided they are reasonably sane), but as I wrote earlier you're also very welcome to take the code, or parts of it, and use it on your own. If you build something cool, we in the k6 community would love to hear about it - feel free to announce it in e.g. the k6 Slack channels.