This post was originally written by Simon Stratton on the SafeBear blog.

Quick Links

- Exploratory Testing

- Layout Testing

- Test Automation Frameworks

- Performance Testing

- API Testing

- Microservices

- Test Data

- Test Environments

- Reporting

- BDD

- Learning

- Unit Testing

- Popular Methodologies

At the end of the year, I like to look back at the new discoveries I've made and the lessons I've learnt as a QA engineer. 2019 has proved to be a bonanza year, with some startling changes in direction for Test and with new technologies and methodologies breaking into the mainstream. There have been:

More progress with AI in tooling and reporting, with an exciting new library that allows you to build your own AI-powered Test Automation framework.

The revolutionary ability to control your Test Environment within your tests.

New Testing methodologies that I'd never encountered before this year (Layout Testing, Mutation Testing, new advances in Shift-Right Testing).

Surprising mergers and acquisitions.

RPAs becoming massively popular and then disappearing again, leaving some interesting open-source options.

And much, much more. I'll dive right in with the oft-overlooked area that surprised me the most.

Exploratory Testing

One of the most under-appreciated and difficult types of Testing is Exploratory Testing. It's incredibly hard to judge how much is too much or too little, and what the scope should be for each feature release, update or bug fix. However, with Test Automation replacing manual Regression Testing, it's a discipline that Testers are (rightfully) starting to devote more time and attention to.

In the past, I've mapped user journeys through an application using Test Cases, and then used these as the basis for Exploratory and Negative Testing. But these are hard to maintain, and it's difficult to describe different flows without creating many Test Cases with duplicated steps.

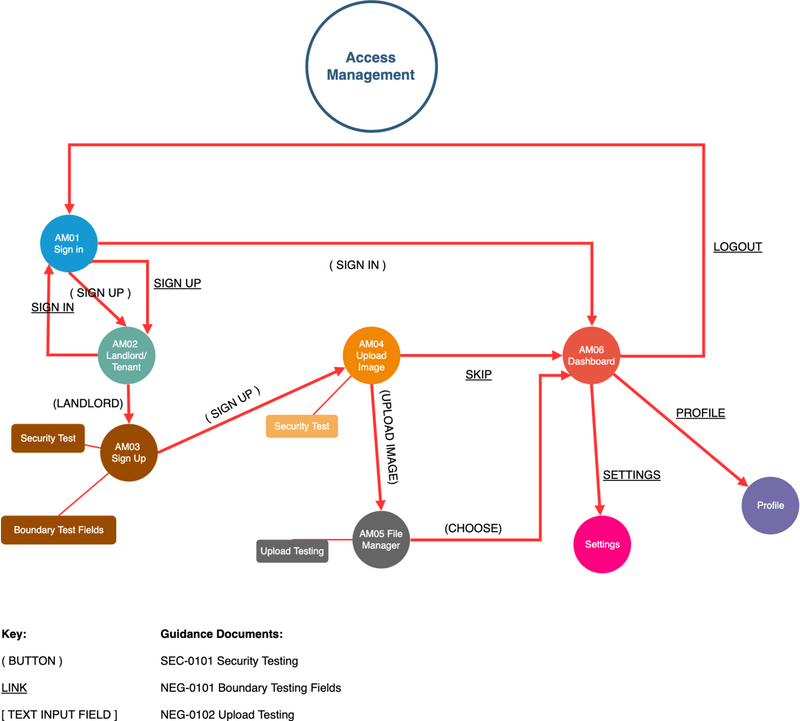

Recently I came across this blog post by Alan Richardson (the Evil Tester) which describes how to model the flow of user interactions through your application for Exploratory Testing. It's revolutionised my approach. For every new application I now test, I map the flow by hand (as described in the blog post). And once a full run-through is complete, I break the flows down into Feature areas and document them on draw.io.

These have a very simple key. If I click on a button, it's marked in parentheses (), links are underlined, and text boxes that trigger a change in the page are contained in square brackets []. Each new page is a node with a unique ID, and notes on which Exploratory Tests to perform are linked to each node. The paths are represented as arrows, which are initially red. When they've been tested, they're turned green. draw.io allows you to save these diagrams to GitHub, making it easy to version them along with the code. I've included an example below.

You can find the XML for the diagram on my GitHub.

As you can see, this makes it easy to quickly identify which areas of the application have changed with a new release, and to scope your testing accordingly. Any new flow is added with red arrows until it's tested.
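A flow map like this is really a directed graph, so the same idea can be sketched in code. The snippet below is a minimal Python model and not part of the draw.io workflow described above. The page IDs (`P1`, `P2`...) and the action labels are hypothetical, and underscores stand in for underlined links. Each flow starts red (untested) and turns green once it has been tested, so listing the red flows gives you the scope for the next session:

```python
from dataclasses import dataclass, field


@dataclass
class Flow:
    """One arrow on the map: a user action that leads from one page node to another."""
    source: str            # unique ID of the starting page node
    target: str            # unique ID of the destination page node
    action: str            # labelled with the key: "(Button)", "_link_" (underlined), "[text box]"
    tested: bool = False   # red (False) until it has been tested, then green (True)


@dataclass
class FlowMap:
    flows: list[Flow] = field(default_factory=list)

    def add(self, source: str, target: str, action: str) -> None:
        # Every new flow starts red, i.e. untested.
        self.flows.append(Flow(source, target, action))

    def mark_tested(self, source: str, target: str) -> None:
        # Turn the matching arrow(s) green.
        for flow in self.flows:
            if flow.source == source and flow.target == target:
                flow.tested = True

    def untested(self) -> list[Flow]:
        # The red arrows: the scope for the next Exploratory Testing session.
        return [flow for flow in self.flows if not flow.tested]


flow_map = FlowMap()
flow_map.add("P1", "P2", "(Log in)")
flow_map.add("P2", "P3", "_My account_")
flow_map.mark_tested("P1", "P2")
flow_map.add("P2", "P4", "[Search]")  # added by a new release, so it starts red

for flow in flow_map.untested():
    print(f"{flow.source} -> {flow.target} via {flow.action}")
```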

I have found this approach to be useful to me, but don't take it as gospel. Try drawing the Application Flows by hand first and find an approach that suits your Exploratory Testing style.

Chrome Extensions

There are also many Chrome extensions that are useful for Exploratory Testing. I've included a few below:

Screencastify is useful for recording your Exploratory Testing session, just in case you forget what you did to break the system.

Bug Magnet is an oldie, but a goodie. It puts a library of problematic input values into your right-click menu, which speeds up negative testing of the elements on the screen.

CounterString and Useful Snippets are both excellent offerings from the Evil Tester and well worth installing in your browser.
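A counterstring is a string that describes its own length. Each number gives the position of the asterisk that follows it, so if a field silently truncates your input, the last number you can see tells you roughly where the text was cut off. The sketch below assumes the common `2*4*6*8*11*` format and a single-character marker. It illustrates the idea and is not the extension's source code:

```python
def counterstring(length: int, marker: str = "*") -> str:
    """Build a string of exactly `length` characters in which each number gives
    the 1-based position of the marker after it, e.g. 2*4*6*8*11*.
    Assumes `marker` is a single character.
    """
    parts = []
    position = length
    while position > 0:
        token = f"{position}{marker}"
        if len(token) > position:
            # Not enough room left at the start for the whole number: keep its tail.
            token = token[-position:]
        parts.append(token)
        position -= len(token)
    # Tokens were generated from the end of the string backwards.
    return "".join(reversed(parts))


print(counterstring(11))  # 2*4*6*8*11*
```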

Layout Testing

This was new to me, but it's becoming more and more important as PWAs (Progressive Web Apps: websites that look and feel like an app when viewed on your mobile device) start to become the norm. Layout Testing checks that elements keep the right size, position and alignment relative to each other across different screen sizes. If you want to look into this, I'd recommend the Galen Framework, which can easily be plugged into your project and CI.

If you want to practise using it, GoThinkster have the same real-world web app built in a huge variety of frameworks for you to play with. I also really like HNPWA.
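At its core, a layout test compares the rendered positions and sizes of elements against a spec for each screen size. The sketch below is not Galen's spec language. It is a hypothetical Python check over bounding boxes, which in a real suite your automation tool would read from the browser. The element names and the 8px gap rule are made up for illustration:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """An element's rendered bounding box in CSS pixels; (x, y) is the top-left corner."""
    x: int
    y: int
    width: int
    height: int

    @property
    def right(self) -> int:
        return self.x + self.width

    @property
    def bottom(self) -> int:
        return self.y + self.height


def is_above(upper: Box, lower: Box, min_gap: int = 0) -> bool:
    # The upper element's bottom edge must be at least min_gap px above the lower's top edge.
    return lower.y - upper.bottom >= min_gap


def layout_errors(elements: dict[str, Box], viewport: Box) -> list[str]:
    """Check one screen size against a tiny, hypothetical layout spec."""
    errors = []
    if not is_above(elements["header"], elements["content"], min_gap=8):
        errors.append("header must be at least 8px above content")
    for name, box in elements.items():
        # Vertical overflow is fine (the page scrolls); horizontal overflow is not.
        if box.x < viewport.x or box.right > viewport.right:
            errors.append(f"{name} overflows the viewport horizontally")
    return errors


phone = Box(0, 0, 375, 667)
ok = {"header": Box(0, 0, 375, 56), "content": Box(0, 64, 375, 1200)}
broken = {"header": Box(0, 0, 375, 56), "content": Box(0, 60, 400, 1200)}
print(layout_errors(ok, phone))      # []
print(layout_errors(broken, phone))  # two errors: gap too small, content too wide
```

Running the same checks against several viewport sizes is what turns this into a responsive layout test.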

Test Automation Frameworks

Cypress.io is still the best Test Automation framework for Web Apps out there in terms of usability and design. But it still doesn't have cross-browser abilities, or the ability to jump domains, making it unusable for a lot of enterprise applications with users who don't use Chrome. I've also had a lot of issues trying to get it to work with TypeScript.

TestCafe is still underrated and getting better with every release.

Selenium is continuing its slow evolution towards Selenium 4.0 and, hopefully, a simpler and more stable application with enhanced logging capabilities. Some user-friendly documentation has even been produced, although having to click on arrows to move through the chapters like a presentation is infuriating for some.

Appium is also evolving with version 2.0. No longer a bloated beast that installs everything you might ever need, the new version will be a modular, lightweight interface that can be tailored to your needs.

Test Project has become the first free, open-source, cloud automation framework and is worth a look because of this.

WebDriverIO stayed on the bleeding edge by becoming the first framework (that I know of) to support querying the Shadow DOM (others, such as TestCafe, can now do this too). The framework also now lets you automate the browser using the Chrome DevTools Protocol (like Puppeteer) instead of the WebDriver Protocol. It also now includes Visual Regression Testing, which is becoming a must for a lot of Test Frameworks.
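Visual Regression Testing boils down to comparing a fresh screenshot against an approved baseline, and failing when too much has changed. Real tools add smarter matching, such as ignored regions and anti-aliasing handling. Treat the following as a minimal Python sketch that assumes the screenshots are already decoded into rows of RGB tuples. The `tolerance` and `max_diff_ratio` parameters are illustrative:

```python
Pixel = tuple[int, int, int]   # (R, G, B), each 0-255
Image = list[list[Pixel]]      # rows of pixels


def diff_ratio(baseline: Image, candidate: Image, tolerance: int = 0) -> float:
    """Fraction of pixels whose colour differs by more than `tolerance` on any channel."""
    if len(baseline) != len(candidate) or any(
        len(row_a) != len(row_b) for row_a, row_b in zip(baseline, candidate)
    ):
        raise ValueError("screenshots must have the same dimensions")
    total = changed = 0
    for row_a, row_b in zip(baseline, candidate):
        for pixel_a, pixel_b in zip(row_a, row_b):
            total += 1
            if any(abs(a - b) > tolerance for a, b in zip(pixel_a, pixel_b)):
                changed += 1
    return changed / total if total else 0.0


def matches_baseline(baseline: Image, candidate: Image,
                     tolerance: int = 0, max_diff_ratio: float = 0.0) -> bool:
    # A small tolerance absorbs rendering noise; max_diff_ratio allows tiny changes.
    return diff_ratio(baseline, candidate, tolerance) <= max_diff_ratio


white = [[(255, 255, 255)] * 4 for _ in range(4)]
changed = [row[:] for row in white]
changed[1][2] = (0, 0, 0)          # one pixel really changed
noisy = [row[:] for row in white]
noisy[0][0] = (250, 250, 250)      # slight rendering noise

print(diff_ratio(white, changed))                    # 0.0625 (1 of 16 pixels)
print(matches_baseline(white, noisy, tolerance=10))  # True
```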

Wrappers for Test Frameworks

Smashtest is an interesting project for making human-readable automated test cases on top of Selenium. I see a lot of companies using Cucumber just for this reason. This may be a more suitable alternative.

CodeceptJS: I love the concept of this framework, although I've yet to use it in anger. Again, it's designed to make simple, human-readable automated tests, but the real genius lies in the plug-and-play choice of underlying Test Framework. This wrapper can sit above Puppeteer, WebDriver, Protractor, TestCafe etc., abstracting away all the complexity and allowing you to easily switch between frameworks if you decide that one isn't working for you. There are also helpers for Appium and Detox for mobile testing, and for ResembleJS for Visual Testing. This has the potential to become my go-to framework.
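The plug-and-play idea is essentially the adapter pattern. Tests talk to a small, readable API, and a swappable driver does the browser work. The Python sketch below is a hypothetical illustration of that design, loosely modelled on CodeceptJS's `I` object (`I.amOnPage`, `I.fillField`, `I.click`, `I.see`). `FakeDriver` stands in for a real Puppeteer or WebDriver adapter, and the URL is made up:

```python
from typing import Protocol


class Driver(Protocol):
    """The small surface the tests need; each backend adapter implements it."""
    def open(self, url: str) -> None: ...
    def fill(self, field: str, value: str) -> None: ...
    def click(self, label: str) -> None: ...
    def visible_text(self) -> str: ...


class Actor:
    """Human-readable test API, loosely modelled on CodeceptJS's `I` object."""

    def __init__(self, driver: Driver) -> None:
        self.driver = driver

    def am_on_page(self, url: str) -> None:
        self.driver.open(url)

    def fill_field(self, field: str, value: str) -> None:
        self.driver.fill(field, value)

    def click(self, label: str) -> None:
        self.driver.click(label)

    def see(self, text: str) -> None:
        assert text in self.driver.visible_text(), f"expected to see {text!r}"


class FakeDriver:
    """Hypothetical in-memory backend; a real one would call Puppeteer, WebDriver, etc."""

    def __init__(self) -> None:
        self.calls: list[str] = []
        self.fields: dict[str, str] = {}
        self.page = ""

    def open(self, url: str) -> None:
        self.calls.append(f"open {url}")
        self.page = "Please sign in"

    def fill(self, field: str, value: str) -> None:
        self.calls.append(f"fill {field}")
        self.fields[field] = value

    def click(self, label: str) -> None:
        self.calls.append(f"click {label}")
        if label == "Sign in" and self.fields.get("username"):
            self.page = f"Welcome, {self.fields['username']}"

    def visible_text(self) -> str:
        return self.page


def login_scenario(i: Actor) -> None:
    # The scenario never mentions the backend, so swapping drivers needs no test changes.
    i.am_on_page("https://example.test/login")
    i.fill_field("username", "alice")
    i.click("Sign in")
    i.see("Welcome, alice")


driver = FakeDriver()
login_scenario(Actor(driver))
```

Switching frameworks then means writing a new class with the same four methods. `login_scenario` stays exactly as it is.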

Other News

Applitools have been running a worldwide 'Rockstar' Hackathon to prove once and for all that Visual Testing is faster and more reliable than traditional Test Automation tools such as Selenium. Another one is scheduled for Q1 next year. Percy and Ghost Inspector are interesting competitors to Applitools that are worth a look.

AI in Test Automation

Appium are investigating AI in Test Automation with the folks at Test.ai, although it's still at an early stage. If you're brave enough to build your own Test Automation tool that incorporates AI, then AGENT is an open-source library that has done the heavy lifting of the 'machine learning' part for you. It's certainly worth a look.

RPAs (Robotic Process Automation)

For those who are interested in RPAs, UI Vision (previously Kantu) and Automagica are interesting open-source options. UI Vision stands out because it supports OCR (Optical Character Recognition).

Performance Testing

K6 is still, in my opinion, far-and-away the best tool to use for integrating performance testing into your project and CI. It talks to your monitoring systems so well, and it's so lightweight, you can even use it to keep an eye on your Live application.
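One reason K6 fits so well into CI is its thresholds. A rule such as `p(95)<500` on `http_req_duration` fails the run, with a non-zero exit code, when the 95th-percentile response time reaches 500 ms. The Python sketch below only illustrates the arithmetic behind such a rule. It uses the nearest-rank percentile and hypothetical response times, and K6 itself may calculate percentiles slightly differently:

```python
import math


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least p% of samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def passes(durations_ms: list[float], p: float, limit_ms: float) -> bool:
    """Mirror of a threshold such as p(95)<500: the p-th percentile must be below the limit."""
    return percentile(durations_ms, p) < limit_ms


# Hypothetical response times (ms) from one load-test run.
durations = [120, 130, 110, 480, 150, 140, 135, 125, 160, 900]
print(percentile(durations, 95), passes(durations, 95, 500))  # 900 False
print(percentile(durations, 90), passes(durations, 90, 500))  # 480 True
```

A single slow outlier is enough to fail a p95 threshold on a small sample. This is why the rule belongs on realistic run lengths, not a handful of requests.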

JMeter is powerful, but slow and bulky, and it still feels awkward, dated and inaccessible to other members of the Scrum team.

Gatling has had a nice makeover with some great branding, but it's nowhere near as flexible and fast as K6 (which is written in Go, while Gatling is written in Scala). You'll soon find yourself spending money on their Enterprise option as your scenarios get more complex. However, if you do have awkward protocols and can't use K6, then I'd recommend checking out Artillery.

API Testing

Postman have released a new tool called the API Visualizer. I haven't had a chance to play with it yet, but it looks like a flexible way to visually map the data you get from your API in any graph format you can think of.

IBM have released a free(ish) cloud API testing tool with the snappy name of API Connect test and monitor. It feels a little over-engineered, and light on features compared to Postman.

Microservices

Pact is still pretty much the leader of the pack when it comes to Consumer-Driven Contract Testing. However, be aware that every implementation comes with a maintenance overhead. HLTech have created a wrapper called Judge Dredd that may help counter this issue.

Spring Cloud Contract is also worth a look. It has fantastic documentation, but not all the functionality of Pact just yet.
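The core of Consumer-Driven Contract Testing is that the consumer records exactly what it relies on, and the provider is then verified against that record. The sketch below does not use Pact's API or file format. It is a hypothetical, minimal contract for a made-up `/users/42` endpoint, paired with a verifier that checks status codes and the types of the fields the consumer actually uses:

```python
from typing import Callable

# Written by the consumer: only the fields it actually reads (hypothetical endpoint).
CONTRACT = {
    "consumer": "web-frontend",
    "provider": "user-service",
    "interactions": [
        {
            "description": "fetch an existing user",
            "request": {"method": "GET", "path": "/users/42"},
            "response": {"status": 200, "body": {"id": int, "name": str}},
        }
    ],
}

Handler = Callable[[str, str], tuple[int, dict]]


def verify_provider(contract: dict, handler: Handler) -> list[str]:
    """Replay each interaction against the provider and collect any mismatches."""
    failures = []
    for interaction in contract["interactions"]:
        name = interaction["description"]
        request, expected = interaction["request"], interaction["response"]
        status, body = handler(request["method"], request["path"])
        if status != expected["status"]:
            failures.append(f"{name}: status {status} != {expected['status']}")
            continue
        for field, field_type in expected["body"].items():
            if field not in body:
                failures.append(f"{name}: missing field {field!r}")
            elif not isinstance(body[field], field_type):
                failures.append(f"{name}: {field!r} is not {field_type.__name__}")
    return failures


def provider_v1(method: str, path: str) -> tuple[int, dict]:
    if (method, path) == ("GET", "/users/42"):
        return 200, {"id": 42, "name": "Alice", "email": "alice@example.test"}
    return 404, {}


def provider_v2(method: str, path: str) -> tuple[int, dict]:
    # A provider change that renames "name": the contract should catch it.
    if (method, path) == ("GET", "/users/42"):
        return 200, {"id": 42, "full_name": "Alice"}
    return 404, {}


print(verify_provider(CONTRACT, provider_v1))  # []
print(verify_provider(CONTRACT, provider_v2))  # ["fetch an existing user: missing field 'name'"]
```

The extra `email` field does not break the contract, but renaming `name` does. The consumer only pins down what it uses, which is the point of letting the consumer drive the contract.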

Test Data

Yes, I have no idea what this means either. But I really want to know.

Faker is still the king when it comes to generating usable and unique test data for all your Test Automation needs. I've linked to the JS version here, but it's been ported to most languages.
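Faker covers far more data types and locales than anything worth writing by hand. The standard-library-only sketch below uses hypothetical name pools and an assumed `example.test` email domain. It just shows two properties worth insisting on in automated tests, whichever generator you use: values are unique, and a fixed seed makes every run reproducible:

```python
import random
import string

# Hypothetical sample pools; a library like Faker ships far larger, localised ones.
FIRST_NAMES = ["Ada", "Alan", "Grace", "Linus", "Margaret", "Tim"]
LAST_NAMES = ["Lovelace", "Turing", "Hopper", "Torvalds", "Hamilton", "Berners-Lee"]


def make_users(count: int, seed: int = 2019) -> list[dict[str, str]]:
    """Generate `count` users with unique email addresses, reproducibly."""
    if count > len(FIRST_NAMES) * len(LAST_NAMES) * 10_000:
        raise ValueError("not enough combinations for that many unique users")
    rng = random.Random(seed)  # fixed seed: the same data on every run
    users: list[dict[str, str]] = []
    seen_emails: set[str] = set()
    while len(users) < count:
        first, last = rng.choice(FIRST_NAMES), rng.choice(LAST_NAMES)
        suffix = "".join(rng.choices(string.digits, k=4))
        email = f"{first}.{last}{suffix}@example.test".lower()
        if email in seen_emails:
            continue  # uniqueness guard: draw again on a collision
        seen_emails.add(email)
        users.append({"name": f"{first} {last}", "email": email})
    return users
```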

Test Environments

Testcontainers is one of the biggest revolutions in Test Environments to come out of 2019. It enables you to manage your dockerized test environments from within your tests.

This allows you to only bring up applications or environments as and when they're needed. Hugely useful for integration testing. It has also been ported to multiple languages.
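Testcontainers starts real Docker containers, which won't run everywhere. To show the same lifecycle, the Python sketch below substitutes a throwaway in-process HTTP server. The environment is started inside the test, handed over as a URL, and always torn down afterwards, even if the test fails. The `running_service` helper and its `/health` check are hypothetical:

```python
import contextlib
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


class HealthHandler(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        # Answers every GET path with "ok"; a container would run your real service here.
        body = b"ok"
        self.send_response(200)
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args) -> None:
        pass  # keep test output quiet


@contextlib.contextmanager
def running_service():
    """Start a throwaway service for one test and always tear it down afterwards."""
    server = HTTPServer(("127.0.0.1", 0), HealthHandler)  # port 0: the OS picks a free port
    thread = threading.Thread(target=server.serve_forever, daemon=True)
    thread.start()
    try:
        host, port = server.server_address
        yield f"http://{host}:{port}"
    finally:
        server.shutdown()
        server.server_close()


def test_service_is_healthy() -> None:
    with running_service() as base_url:
        with urllib.request.urlopen(f"{base_url}/health", timeout=5) as response:
            assert response.status == 200
            assert response.read() == b"ok"
```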

Reporting

ReportPortal is the king of all Test Automation reporting (and is also, amazingly, open-source). You do need to host it yourself (there's a decent guide here for hosting it in the cloud), but it's definitely worth the effort.

Behaviour-Driven Development (BDD)

Cucumber was acquired by SmartBear this year, and their first product launch after the acquisition was Cucumber for Jira, meeting a much-needed demand from many enterprise users. Finally, companies can sync their Feature Files with their Jira tickets if they wish.

Learning

The Test Automation University was launched by Applitools. There are some great courses on there, but you might need to dig around a bit to find them.

Unit Testing

Mutation Testing has become a 'must have' for all dev teams out there. It deliberately plants small bugs (mutants) in your code and checks that your unit tests catch them, which makes it a truly wonderful way to discover how robust your unit tests, and your code, really are.

Snapshot Testing was introduced by Jest and is also becoming essential as the UX and design of a website become all-important. For some companies, the website is the only way a user will engage with their product.
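A toy mutation-testing loop makes the idea concrete. The Python sketch below uses the standard `ast` module to create four mutants of a hypothetical `is_adult` function by swapping its `>=` operator. It then runs two test suites against them. A mutant is "killed" when a test fails. Dedicated tools such as Stryker or PIT apply many more kinds of mutation:

```python
import ast

SOURCE = """
def is_adult(age):
    return age >= 18
"""

# The mutants: replace >= with each of these comparison operators.
REPLACEMENTS = [ast.Gt, ast.Lt, ast.LtE, ast.Eq]


class SwapGtE(ast.NodeTransformer):
    """Rewrite every `>=` in the parsed code to the given operator."""

    def __init__(self, replacement: type) -> None:
        self.replacement = replacement

    def visit_Compare(self, node: ast.Compare) -> ast.Compare:
        self.generic_visit(node)
        node.ops = [self.replacement() if isinstance(op, ast.GtE) else op for op in node.ops]
        return node


def load(tree: ast.Module):
    """Compile a (possibly mutated) tree and return its is_adult function."""
    namespace: dict = {}
    exec(compile(tree, "<mutant>", "exec"), namespace)
    return namespace["is_adult"]


def weak_suite(is_adult) -> None:
    assert is_adult(30) is True
    assert is_adult(5) is False


def strong_suite(is_adult) -> None:
    weak_suite(is_adult)
    assert is_adult(18) is True   # boundary checks are what kill the `>` mutant
    assert is_adult(17) is False


def mutation_score(suite) -> float:
    suite(load(ast.parse(SOURCE)))  # the suite must pass on the original code first
    killed = 0
    for replacement in REPLACEMENTS:
        mutant = load(SwapGtE(replacement).visit(ast.parse(SOURCE)))
        try:
            suite(mutant)
        except AssertionError:
            killed += 1  # a failing test "kills" the mutant
    return killed / len(REPLACEMENTS)


print(mutation_score(weak_suite))    # 0.75: the `>` mutant survives
print(mutation_score(strong_suite))  # 1.0
```

Both suites pass against the original code. Only the one that tests the boundary (17 and 18) kills every mutant, and that is exactly the gap a mutation score exposes.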

Popular Methodologies

'Shift-Left Testing' and 'TDD' have been around for a while now, but this year there's been more focus on the application testing performed in the DevOps and Release phases of a project. Called 'Shift-Right Testing', this encompasses a wide range of techniques that have grown in popularity now that Serverless and Cloud technologies make them more viable.

I've summarised a few below. Some you may recognise, some are relatively new.

Code Instrumentation: Developers add code to the app to monitor the behaviour and performance of the live application, e.g. for debugging (code logic), tracing (code execution), and writing to performance counters and event logs.
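As an illustration of instrumentation, the sketch below wraps a function so that every call updates in-process counters (call count and total time), writes a debug trace of its duration, and logs any exception. The counter names, the logger name and the `checkout` function are hypothetical. In production, the data would usually be sent to a monitoring system rather than kept in module-level dictionaries:

```python
import functools
import logging
import time
from collections import defaultdict

logger = logging.getLogger("instrumentation")  # hypothetical logger name

# In-process stand-ins for performance counters: calls and total seconds per function.
CALL_COUNTS = defaultdict(int)
TOTAL_SECONDS = defaultdict(float)


def instrumented(func):
    """Decorator that records timing counters, a debug trace and any exception."""
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            return func(*args, **kwargs)
        except Exception:
            logger.exception("%s failed", func.__name__)  # event-log entry
            raise
        finally:
            elapsed = time.perf_counter() - start
            CALL_COUNTS[func.__name__] += 1
            TOTAL_SECONDS[func.__name__] += elapsed
            logger.debug("%s took %.3f ms", func.__name__, elapsed * 1000)  # trace
    return wrapper


@instrumented
def checkout(basket: list[float]) -> float:
    # Hypothetical business function being monitored.
    return sum(basket)
```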

Real User Monitoring: A type of performance monitoring that captures and analyzes every transaction made by the users of a website or application, tracking key metrics such as load time and transaction paths. For example:

A developer might notice that during prime time, traffic spikes cause an increase in timeouts, resulting in frustrated customers and lower Google rankings.

An end-user portal like a bank software system may use it to spot intermittent issues, like login failures that only occur under specific, rare conditions.

An app developer may use it to highlight failures in different platforms that don’t show up during pre-deployment testing.

Synthetic User Monitoring: A form of active web monitoring that involves deploying behavioral scripts in a web browser to simulate the path a customer or end-user takes through a website (similar to Test Automation 'happy paths'). This is used as a transaction test for benchmarking your application before launch, comparing releases, and comparing performance against competitors.

Dark Launching: The process of releasing production-ready features to a subset of your users prior to a full release. This enables you to decouple deployment from release, get real user feedback, test for bugs, and assess infrastructure performance.
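Releasing to a subset of users usually relies on deterministic bucketing, so that a given user stays in (or out of) the rollout on every visit. Here is a minimal sketch, assuming users are identified by a string ID and using a hypothetical `new-checkout` feature name:

```python
import hashlib


def in_rollout(user_id: str, feature: str, percent: float) -> bool:
    """Return True if this user falls inside the first `percent`% of users for `feature`.

    Hashing feature + user ID gives every user a stable bucket between 0.00 and 99.99,
    and including the feature name means different features pick different users.
    """
    digest = hashlib.sha256(f"{feature}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000 / 100
    return bucket < percent


exposed = sum(in_rollout(f"user-{n}", "new-checkout", 10) for n in range(10_000))
print(exposed)  # roughly 1,000 of 10,000 users
```

Because the bucket is fixed per user, raising the percentage from 10 to 50 only ever adds users. Nobody who already has the feature loses it.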

Alpha/Beta Testing: Lets early adopters find the bugs that are important to them.

Injecting Chaos: The Chaos Monkey principle. Systematically break (and restore) aspects of the live environment, and ensure that each failure is caught by your monitoring and recovery systems.
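A chaos experiment has two halves: something that injects failures, and evidence that your monitoring and recovery noticed them. The Python sketch below is a hypothetical, in-process stand-in for tools that break real infrastructure. `FaultInjector` fails a configurable fraction of calls to a made-up `fetch_price` dependency. The experiment then checks that every injected fault was recorded, and that the caller degraded gracefully when retries ran out:

```python
import random
from typing import Callable, Optional


class FaultInjector:
    """Wraps calls to a dependency and makes a configurable fraction of them fail."""

    def __init__(self, failure_rate: float, seed: Optional[int] = None) -> None:
        self.failure_rate = failure_rate
        self.rng = random.Random(seed)  # seed it to make an experiment repeatable
        self.injected = 0               # ground truth: how many faults we caused

    def call(self, func: Callable, *args):
        if self.rng.random() < self.failure_rate:
            self.injected += 1
            raise ConnectionError("chaos: injected failure")
        return func(*args)


def fetch_price(item: str) -> float:
    # Hypothetical downstream dependency.
    return {"apple": 0.5}[item]


def resilient_price(injector: FaultInjector, item: str, retries: int = 3,
                    alerts: Optional[list[str]] = None) -> Optional[float]:
    """The recovery path under test: retry, report every failure, then degrade gracefully."""
    for attempt in range(1, retries + 1):
        try:
            return injector.call(fetch_price, item)
        except ConnectionError as error:
            if alerts is not None:
                alerts.append(f"attempt {attempt}: {error}")  # what monitoring would see
    return None  # fallback once every retry has failed


# Experiment: a flaky dependency; every injected fault should show up as an alert.
flaky = FaultInjector(failure_rate=0.3, seed=7)
alerts: list[str] = []
for _ in range(100):
    resilient_price(flaky, "apple", alerts=alerts)
print(flaky.injected, len(alerts))  # the two numbers should match
```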

Dogfooding: When an organization uses its own product. This can be a way for an organization to test its products in real-world usage before the launch of a new release.

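Feature Flags: Also called Feature Toggles. Features are deployed live but can be switched on and off at runtime, without a new deployment. The flags are implemented in the application's code or configuration, often managed through a dedicated feature-flag service.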
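The simplest possible feature flag is a value read at runtime that selects between two deployed code paths. In the sketch below, environment variables named `FEATURE_<NAME>` are an assumed convention, not a standard, and the search functions are hypothetical. A dedicated flag service would let you flip the switch for running servers without a restart or redeploy:

```python
import os

TRUTHY = {"1", "true", "on", "yes"}


def flag_enabled(name: str, default: bool = False) -> bool:
    """Read a flag such as FEATURE_NEW_SEARCH=on from the environment at call time."""
    raw = os.environ.get(f"FEATURE_{name.upper()}")
    if raw is None:
        return default
    return raw.strip().lower() in TRUTHY


def search(query: str) -> str:
    # Both code paths are deployed; the flag decides which one runs for this call.
    if flag_enabled("new_search"):
        return f"new engine results for {query!r}"
    return f"legacy results for {query!r}"
```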

Summary

But enough of my blathering: what do you think? Did I get it horribly wrong and recommend a terrible tool, or miss a tool off the list that would beat all the others hands down? Are my methodologies actually so 2018, and have I completely missed the trendy new 2019 ones? Let me know your thoughts in the comments below.

(Side Note) It's true that I've been selective in my list. I've tried to focus on open-source projects where possible, in order to promote the good work that they're doing. However, that's not to say that there aren't many useful commercial tools out there.